Over the past two semesters, 55 Texas ECE students worked on their Capstone Design projects, and this week they competed in the Fall 2020 Capstone Design Contest. Winners were announced during the Texas ECE Honors Virtual Celebration on Tuesday, December 8, 2020.

In the courses, students solve open-ended problems in small groups over two semesters to identify an opportunity, define the problem, analyze competing needs and requirements, perform prior art and patent searches, develop alternative designs, carry out cost analyses, and select and implement a design solution.

Winning teams were selected in two categories: Honors and Industry.

All project videos can be seen here.

First Place: Industry

Smart Irrigation System

Team:

Victoria Isom, Marwan Madi, Kevin Medina, Nitya Nandagopal, Joseph Onofre, Nino Teruya

Advisor:

Markus Hogue

Description:

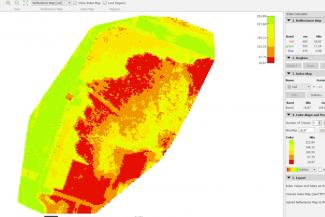

The UT Smart Irrigation System aims to increase the efficiency and reduce the cost of irrigation at UT Austin while conserving water. The current prototype integrates three components: images acquired from an unmanned aerial vehicle (UAV), in-ground soil sensors, and a comprehensive software package that displays irrigation recommendations geographically. The images were collected from a drone using a Normalized Difference Vegetation Index (NDVI) sensor, which indicates how stressed the grass is. Combining this data with the moisture-level and temperature readings from the in-ground soil sensors gives a holistic picture of grass health. Ultimately, the team's system lets the user view and integrate these pieces of data on a dashboard while providing an automated recommendation that keeps the grass alive using as little water as possible.
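To illustrate how an NDVI reading and a soil-moisture reading might be combined into a recommendation, here is a minimal sketch. The NDVI formula, (NIR - Red) / (NIR + Red), is the standard definition; everything else (the function names, the threshold values, and the simple "stressed and dry" rule) is a hypothetical assumption for illustration and is not the team's actual software. Temperature readings are left out for brevity.

```python
def ndvi(nir, red):
    """Standard NDVI: (NIR - Red) / (NIR + Red), ranging from -1 to 1.

    `nir` and `red` are reflectance values for the near-infrared and red bands.
    """
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero when both bands read zero
    return (nir - red) / total


def recommend_watering(nir, red, soil_moisture,
                       ndvi_stress=0.3, dry_moisture=0.2):
    """Hypothetical rule: water only if the grass looks stressed AND the soil is dry.

    `ndvi_stress` and `dry_moisture` are made-up thresholds, not values
    taken from the project.
    """
    stressed = ndvi(nir, red) < ndvi_stress
    dry = soil_moisture < dry_moisture
    return stressed and dry


# Healthy grass reflects much more near-infrared than red light.
print(ndvi(0.5, 0.1))
print(recommend_watering(nir=0.3, red=0.25, soil_moisture=0.1))
```

Requiring both signals is one way to avoid watering grass that is stressed for reasons other than dryness; a real system would likely tune the thresholds per zone.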

First Place: Honors

An Unbiased Machine Learning Framework

Team:

Nathaniel Jackson, Utsha Khondkar, Ali Mansoorshahi, Zhendong Qian, Muhammed Mohaimin Sadiq, Allen Zhang

Advisor:

Dr. Joydeep Ghosh

Description:

It might be hard to imagine how decisions made by an indifferent machine could ever be racist or sexist. However, machine learning models trained on biased data often inherit human prejudices. As machine learning becomes more pervasive in our everyday lives, in areas such as the criminal justice system, hiring, banking, and healthcare, it is important to ensure that decision-making models are fair to everyone, regardless of their race, sex, age, or other legally protected attributes. To this end, we introduce our unbiased machine learning framework, a solution combining the domains of Explainable Artificial Intelligence (XAI) and Fair Machine Learning (FairML) to process biased models and make them fairer. We use the XAI techniques of Shapley Additive Explanations (SHAP), Burden, and Leave-One-Covariate-Out (LOCO) to detect bias in models, and then leverage the same explanations to mitigate that bias using a variant of a method called Derived Predictors from the FairML domain.
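As a rough illustration of the LOCO idea, the sketch below refits a model with one covariate removed and measures how much the prediction error grows; a large increase for a protected attribute suggests the model's decisions depend on it. This is only a toy under stated assumptions: the group-mean "model", the function names, and the four-row loan dataset are all hypothetical, the error is measured on the training rows rather than on held-out data, and none of it is taken from the team's framework.

```python
from collections import defaultdict


def fit_group_mean(rows, targets, features):
    """Toy model: predict the mean target of training rows that share
    the same values for the given features."""
    sums = defaultdict(lambda: [0.0, 0])
    for row, y in zip(rows, targets):
        key = tuple(row[f] for f in features)
        sums[key][0] += y
        sums[key][1] += 1
    overall = sum(targets) / len(targets)
    means = {k: s / n for k, (s, n) in sums.items()}

    def predict(row):
        # Fall back to the overall mean for unseen feature combinations.
        return means.get(tuple(row[f] for f in features), overall)

    return predict


def mean_abs_error(predict, rows, targets):
    return sum(abs(predict(r) - y) for r, y in zip(rows, targets)) / len(targets)


def loco_importance(rows, targets, features):
    """For each feature, refit without it and report the increase in error."""
    base = mean_abs_error(fit_group_mean(rows, targets, features), rows, targets)
    scores = {}
    for f in features:
        reduced = [g for g in features if g != f]
        model = fit_group_mean(rows, targets, reduced)
        scores[f] = mean_abs_error(model, rows, targets) - base
    return scores


# Hypothetical loan decisions that depend only on the protected "group" attribute.
rows = [
    {"income": "high", "group": "a"},
    {"income": "high", "group": "b"},
    {"income": "low", "group": "a"},
    {"income": "low", "group": "b"},
]
approved = [1, 0, 1, 0]

scores = loco_importance(rows, approved, ["income", "group"])
print(scores)
```

In this toy data, dropping `group` hurts accuracy while dropping `income` does not, which is the kind of signal a bias audit would flag for closer inspection.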